After years struggling with tracking metrics for a disaster recovery program, the authors of this article came across a metric tracking system used by a peer which was successfully modified for DR use. The system is shared here to help you move from nagging to supporting the teams that you work with.

By Cullen Case Jr., CBCP and Stephen Wolters.

Metrics to show efficacy and return on investment are not new. There have been surges and dips in the intensity of the push to implement them, depending on what industry you are in. It may be surprising to many that cities and counties have excellent metrics to track and report on the work they do. Look up your local Comprehensive Financial Report and I suspect you will be surprised by the number of metrics that are tracked for any given city or county. That doesn’t help, though, when you can’t track the number of widgets produced, rejection rates, miles of road plowed for snow, emergency medical calls per staff member, and so on. For business continuity and disaster recovery types like us, the metrics are a little softer. And, more often than not, you are beholden to someone else’s effort and to the competing priorities you must work through to get their attention, so that their task is accomplished and your metric is therefore in good standing. It really, really can be frustrating.

Having worked in emergency management, business continuity, and disaster recovery for over two decades combined, I’ve always taken the perspective that this is our lot in life. We ask, nag, cajole, and rely on our personal relationships to get the teams we work with to finish their tasks. And from what my friends in the profession say, this is the norm. For years we looked for a good metric to show our effectiveness, and each time we ended up scrapping it because we were reliant on someone else’s effort. Then I saw a tracking board that a peer used for his work, which also depended on other teams’ support to get done. It was mind-blowing; I could immediately see how to flip the script: from us being rated on other people’s work, to us tracking their efforts to ensure their systems were resilient for the disaster recovery program.

This changed our relationship from being the nag, spending hours reaching out to check on status and get a new ETA, to being the helper that others sought out to figure out what they needed to do to get their system listed as passing. The examples and descriptions here are for a disaster recovery program, but the approach can just as easily be implemented for a business continuity focus, an emergency management focus, or almost any documented program. I say documented because you have to have defined what is expected of others in relation to the program you are tracking.

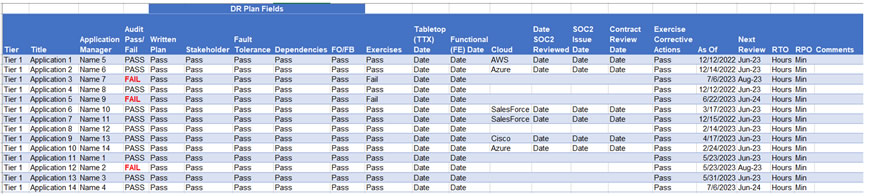

For our disaster recovery program we have documented that each critical application must have a completed DR plan (with standardized sections including assigned roles, storage, network, and failover and failback information), a tabletop exercise, a functional failover exercise, a recovery point objective (RPO), a recovery time objective (RTO), etc. The DR plan is our single source of truth and we treat it with a trust-but-verify mentality: the application manager or DR planner updates the information, and we review some, all, or none of it depending on our past experiences with that team and our capacity to review.

These fields from the DR plan then populate in a pass/fail state in the Disaster Recovery Scorecard (DRSC), resulting in a view like this (modified to protect the innocent):

*Some of the fields were removed for simplicity and readability.
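As a rough illustration of how DR plan fields might roll up into pass/fail cells, here is a minimal Python sketch. The field names, the pass rules (a field passes when it is filled in or the exercise has been completed), and the sample applications are all assumptions made for this example; it is not the actual DRSC implementation.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DRPlan:
    """Hypothetical subset of the fields a DR plan might record."""
    application: str
    plan_filled_out: bool
    tabletop_exercise_done: bool
    failover_exercise_done: bool
    rpo_hours: Optional[float] = None  # None means "not documented"
    rto_hours: Optional[float] = None  # None means "not documented"


# Column order for the scorecard (illustrative labels).
COLUMNS = ["DR plan", "Tabletop", "Failover", "RPO", "RTO"]


def score_plan(plan: DRPlan) -> dict:
    """Map each tracked field to True (pass) or False (fail).

    Assumed rule: documented or completed means pass.
    """
    return {
        "DR plan": plan.plan_filled_out,
        "Tabletop": plan.tabletop_exercise_done,
        "Failover": plan.failover_exercise_done,
        "RPO": plan.rpo_hours is not None,
        "RTO": plan.rto_hours is not None,
    }


def render_scorecard(plans: list) -> str:
    """Build a plain-text scorecard with one row per application."""
    lines = ["Application".ljust(16) + "".join(c.ljust(10) for c in COLUMNS)]
    for plan in plans:
        scores = score_plan(plan)
        cells = "".join(
            ("PASS" if scores[c] else "FAIL").ljust(10) for c in COLUMNS
        )
        lines.append(plan.application.ljust(16) + cells)
    return "\n".join(lines)


# Made-up sample data, for illustration only.
plans = [
    DRPlan("Payroll", True, True, False, rpo_hours=4, rto_hours=8),
    DRPlan("Intranet", True, False, False),
]
print(render_scorecard(plans))
```

Because the scorecard is derived entirely from the plan, a team fixes a failing cell by updating its own DR plan, which keeps the plan as the single source of truth.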

We realize this is not perfect by any means; however, it is light years ahead of our past efforts, and we have found that the teams we work with are much more readily available and keenly interested in updating their information to make sure that their application is listed in a passing state. It helps enormously that the VP of Infrastructure reviews the DRSC periodically and about once a month shows it to the IT leadership team, which includes the CIO. However, even before this increased visibility there was significant interest in making sure that all failing fields were remedied. As with any program, though, having a champion and a governing body makes a difference.

Depending on program maturity you will need to have the following, not all but as much of it as possible (items with an ** are a must):

- Program champion,

- Program governing body/committee,

- **Established expectations such as service level agreements or policy for readiness of systems,

- Single source of truth; this reduces your duplication of effort and need to go to multiple resources to gather the information,

- **SharePoint, shared drive, intranet, or other means of publicizing the results where all can see; have no secrets,

- Repercussionless (yes this is a made-up word); this is about improving the resiliency of the program not about punishing people or making examples of them,

- Flip the script; make sure to change the interaction from the team constantly nagging for updates to being the team there to help get applications to a passing state (you are there to help).

With the above in place, any program can have an effective metric-based review process, and you can move from the dreaded position of the nag to the lauded position of the subject matter expert who is a resource to help.

The authors

By Cullen Case Jr., CBCP and Stephen Wolters. Contact Cullen at https://www.linkedin.com/in/ccasejr/